Retrospectiva #5

Happy February! Just like that, we are close to wrapping up the first quarter of 2026. After quite some time roaming around, we finally flew back home to Copenhagen. It's cold, windy, and grey, but it's also calm, organized, and cozy. Most of all: it's home.

Another good thing about Denmark is that it's one of the flattest countries in Europe. Perfect for increasing weekly running volume. It's cold, but it's almost time to ditch the running tights! Just a couple months left.

Using

We've been going pretty much everywhere with Allegra: small walks, airplanes, high-speed trains. We've been lucky - she's really chill, and sleeps most of the time. One of our best purchases was the Ergo Baby Omni 360, which Vitto managed to find second-hand at a nice price. It's been absolutely great for taking her everywhere, and it should last us many more months. A strong recommendation for parents out there.

Today is the day I canceled copilot. https://t.co/OPUIEX5eIe

— Duarte (@duarteocarmo) February 27, 2026

As for tech, two interesting things have been changing in my workflow (which is still unstable). The first is my increasing usage of coding agents for everyday things. For tasks I find myself repeating more and more, I've been using agent skills. I still bounce around different agent CLIs quite a bit, but I can largely reuse the skills across tools. For the past month, I've been using a mix of the Codex app and the shitty-but-not-so-shitty coding agent Pi. The second interesting thing is the increasing capability of local LLMs. For example, I completely replaced my GitHub Copilot subscription with my own fork of llama.vim, called llama.lua.

Reading

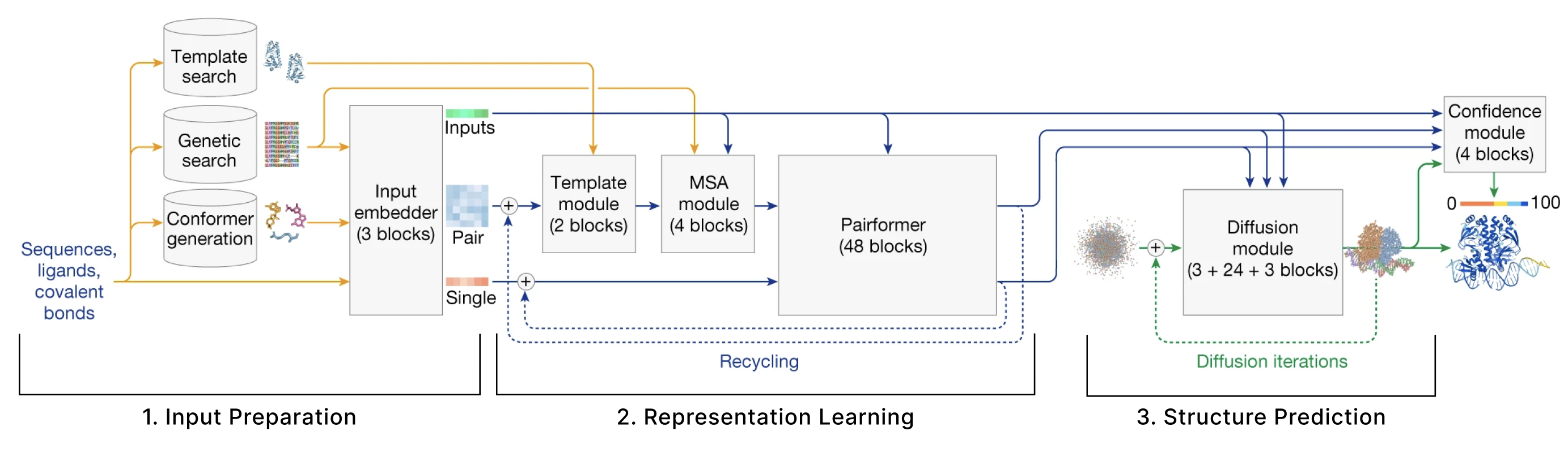

Two long-read recommendations for this month. The first is The Illustrated AlphaFold by Elana P. Simon. She does a great illustrated rundown of the key inner workings of the famous algorithm from Google DeepMind. Another recommendation is the latest article from Raschka: A Dream of Spring for Open-Weight LLMs: 10 Architectures from Jan-Feb 2026. It's funny how LLM architectures have changed - but really, not all that much.

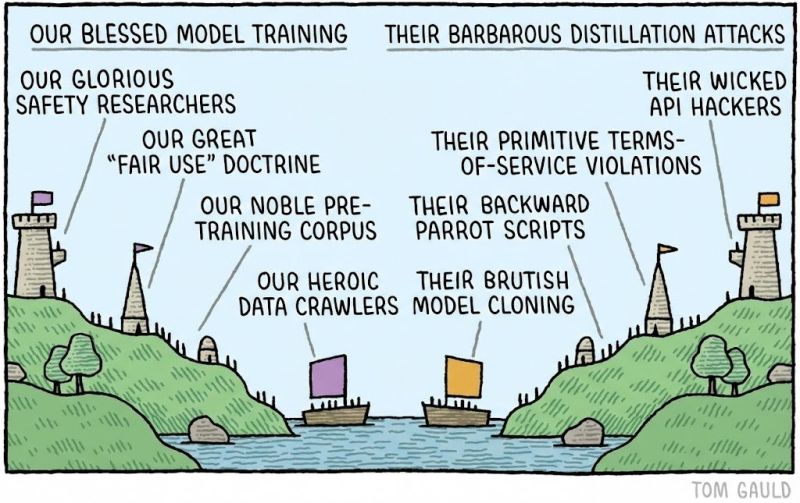

I also recommend the latest bomb from Anthropic accusing almost every Chinese lab of calling their API to distil their own models. Isn't it funny how large labs train their models on the entire Internet, but then get very protective when someone starts doing the same with their API? In any case - didn't Anthropic do the exact same thing, but with book authors?

Pretty much finished with LLMOps from Abi Aryan. Interesting read to consolidate some of the ideas from everything I've been seeing in the field. This month I've picked up Essentialism from Greg McKeown, and The Art of Doing Science and Engineering from Richard Hamming. Not sure which one will stick yet.

Listening

I have three podcast recommendations for this month. Unsurprisingly - they are all very much about LLMs, AI, and the future of technology. But I'm sure you were already expecting that.

- The Evolution of Reasoning in Small Language Models with Yejin Choi (TWIML AI Podcast): I'm a big believer in the increasing power of small language models.

- The third golden age of software engineering (The Pragmatic Engineer): How the world of software is changing, and what to focus on.

- Jared Sleeper on Which Software Companies Will Survive the “SaaSpocalypse” (Odd Lots): Software stocks have been dipping. Why?

Watching

Every year, I try to completely avoid any sort of news related to Formula 1. I don't watch races during the year, and I block out everything I possibly can about it. Why? Because I'm always waiting for the next season of Drive to Survive. You should too, even if you're not a fan of cars (I've never been one). I am a fan of competitive sports, though, and this show captures that essence flawlessly.

That's it for the February edition of Retrospectiva. See you next month!